Their principles

In 2018, the OpenAI charter mentioned AGI 12 times; the new version mentions it twice.

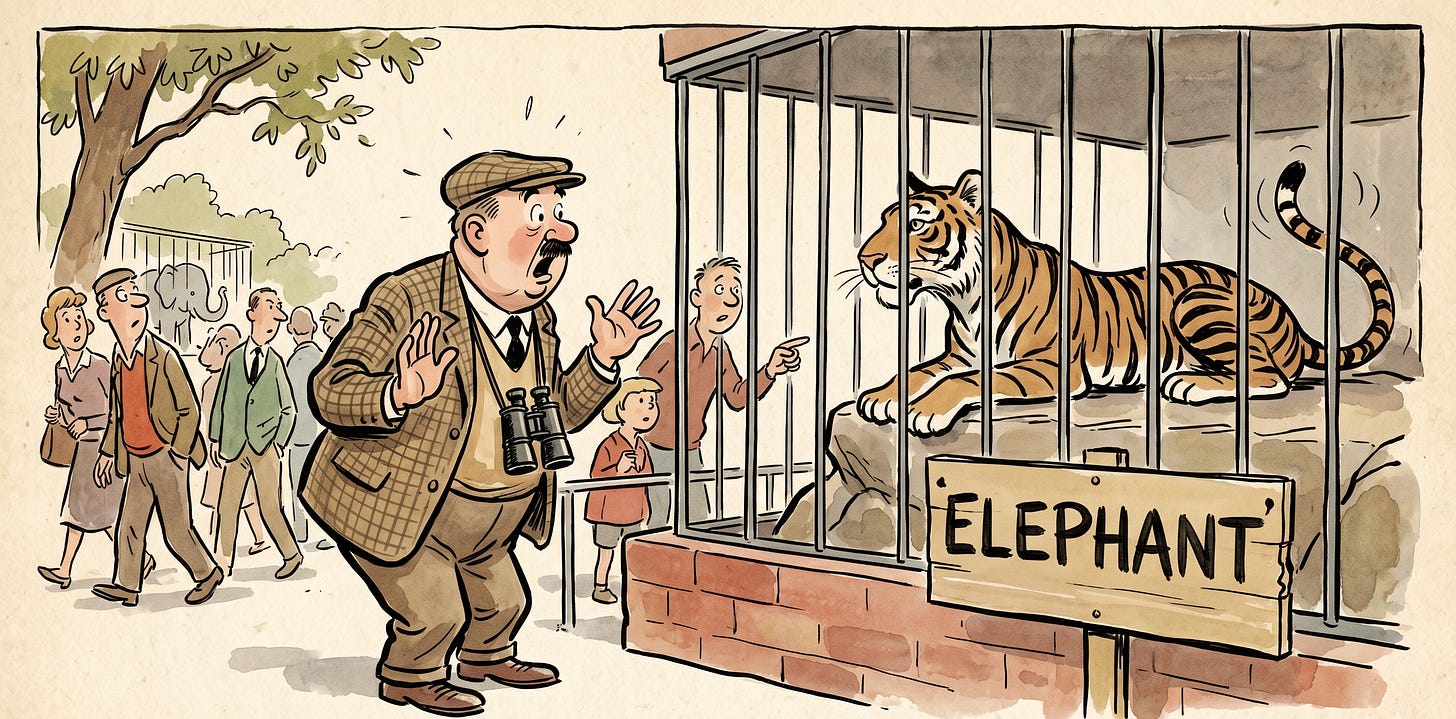

AGI was the whole reason OpenAI existed. The non-profit structure, the safety rhetoric, the recruiting pitch, the Musk money, the moral seriousness: all of it pointed at AGI as the north star. Now it has been downgraded to a footnote. The new organizing concept, “iterative deployment,” sounds like a corporate version of FAFO.

The 2018 charter said:

> We are concerned about late-stage AGI development becoming a competitive race without time for adequate safety precautions. Therefore, if a value-aligned, safety-conscious project comes close to building AGI before we do, we commit to stop competing with and start assisting this project.

Call it the merge clause. The promise that if somebody else got there first, OpenAI would stand aside and help. It was the single most distinctive thing in the document. OpenAI promised to lose on purpose if losing was the safer outcome.

It is gone. Not amended. Not re-scoped. Gone.

What replaces the merge clause is the line: we will be transparent about when, how, and why our operating principles change. The register is Politburo. The Five Year Plan was always the Five Year Plan; we have always been at war with Eastasia; principles evolve; comrades, do keep up.

The verbs tell the same story. The 2018 charter ran on we commit, we will, we expect — the verbs of a fiduciary. The 2026 document runs on we envision, we believe, we acknowledge — the verbs of a press release. There is one sentence I recommend rereading: we can imagine periods in the future where we have to trade off some empowerment for more resilience. That is the language of a company pre-announcing, in writing, the principle it will abandon next. The lawyers are worth every penny.

In 2018, the primary fiduciary duty was to humanity. In 2026, OpenAI is “a much larger force in the world than it was a few years ago.” Both sentences are true. They are not the same sentence.

None of this is scandalous. Companies grow up. Idealistic founders get diluted. Promises made when you are small and broke and irrelevant tend to be reread later, with embarrassment. The scandal would be pretending it isn’t happening. To its credit, the 2026 document does not pretend. It admits, almost touchingly, that the principles are principles until they aren’t.

Chekhov has a line about how if you describe a gun on the wall in act one, it must go off in act three. The 2018 charter put a gun on the wall. The 2026 charter quietly took it down and explained that the wall will be redecorated periodically, in response to evolving conditions, with full transparency.

Somewhere in San Francisco, the gun is in a drawer.

The gun is autonomous now.